
PR is welcome.

The problem is that people disagree on what we should be minimizing. Time spent per card or deviation from desired retention? Both are desirable, but we need to choose one.

I think there’s some confusion. In that comment, the “score” is the value by which the cards are sorted. In my current proposal, the score is PRL + 0.1*R.

There, I was suggesting trying different values of stability and elapsed days and then checking which formula for the score produces the most reasonable order.
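A minimal Python sketch of that experiment, assuming the FSRS-5 forgetting curve R = (1 + 19/81 · t/S)^(-0.5) and assuming PRL means the drop in R if the review is postponed by one more day (this assumption reproduces the PRL column of the table later in this thread). The function names are illustrative, not Anki's code.

```python
# Sketch only: assumes the FSRS-5 forgetting curve and PRL = R(t) - R(t + 1).
DECAY = -0.5
FACTOR = 19 / 81  # makes R = 0.9 when elapsed_days == stability


def retrievability(stability: float, elapsed_days: float) -> float:
    """FSRS-5 power forgetting curve."""
    return (1 + FACTOR * elapsed_days / stability) ** DECAY


def prl(stability: float, elapsed_days: float) -> float:
    """Assumed definition: R lost if the review is postponed by one more day."""
    return retrievability(stability, elapsed_days) - retrievability(stability, elapsed_days + 1)


def score(stability: float, elapsed_days: float, weight: float = 0.1) -> float:
    """Sort key PRL + weight * R; cards with higher scores are reviewed first."""
    return prl(stability, elapsed_days) + weight * retrievability(stability, elapsed_days)


# Try a few (stability, elapsed_days) combinations and inspect the resulting order.
combos = [(1, 2), (5, 50), (20, 20), (1, 100), (100, 150)]
for s, t in sorted(combos, key=lambda c: score(*c), reverse=True):
    print(f"S={s:>3} t={t:>3} R={retrievability(s, t):.3f} PRL={prl(s, t):.5f} score={score(s, t):.4f}")
```

Changing `weight`, or swapping in a different `score` function, and re-running the loop is the whole experiment: the formula is judged by whether the printed order looks reasonable.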

When I am dealing with a backlog, I don’t care about the true retention at all. I expect that it will be lower than the desired retention because I am not reviewing cards on time.

I am concerned with the number of days it will take to get back to a normal schedule, and I also want to minimize the impact of the backlog on my total knowledge. So, I think that maximizing the total R at the end of the simulation period would be a good metric for judging sort orders.
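One way to compute that metric: each card's R is its probability of being recalled, so summing R over all introduced cards on the last simulated day gives the expected number of cards you would remember at that point. A sketch assuming the FSRS-5 curve; the `Card` fields are hypothetical, not the simulator's actual data structures.

```python
# Sketch of the "total R at the end of the simulation" metric.
# Assumes the FSRS-5 forgetting curve; the Card fields are hypothetical.
from dataclasses import dataclass

DECAY = -0.5
FACTOR = 19 / 81


@dataclass
class Card:
    stability: float  # days
    last_review: int  # simulation day of the most recent review


def retrievability(stability: float, elapsed_days: float) -> float:
    return (1 + FACTOR * elapsed_days / stability) ** DECAY


def total_retrievability(cards: list[Card], end_day: int) -> float:
    """Expected number of cards recalled if every introduced card were tested on end_day."""
    return sum(retrievability(c.stability, end_day - c.last_review) for c in cards)


# To compare sort orders, run the same simulation with each order and keep
# the one whose final card states give the larger total.
cards = [Card(stability=10, last_review=90), Card(stability=1, last_review=99)]
print(round(total_retrievability(cards, end_day=100), 3))  # 1.8: each card has R = 0.9
```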

You could say that I am biased towards my suggestion, but PRL + 0.1*R beats the current standard (descending R) in all three metrics and also produces a sorting that seems more logical (see my previous comments for examples). However, I am not sure whether this simulation can be used to draw inferences, i.e., whether these differences are statistically significant.

By the way, I tried some more combinations of S and elapsed days and I now think that PRL + 0.09*R is slightly better than PRL + 0.1*R.

Sorted by descending PRL + 0.09*R:

| Stability (days) | Elapsed days | R | PRL | PRL + 0.09*R | PRL + 0.1*R |
|---|---|---|---|---|---|
| 1 | 2 | 0.825 | 0.05890 | 0.1332 | 0.1414 |
| 1 | 3 | 0.766 | 0.04785 | 0.1168 | 0.1245 |
| 5 | 5 | 0.900 | 0.01663 | 0.0976 | 0.1066 |
| 5 | 8 | 0.853 | 0.01418 | 0.0909 | 0.0995 |
| 1 | 6 | 0.645 | 0.02928 | 0.0873 | 0.0937 |
| 20 | 20 | 0.900 | 0.00424 | 0.0852 | 0.0942 |
| 20 | 25 | 0.879 | 0.00396 | 0.0831 | 0.0919 |
| 50 | 52 | 0.897 | 0.00169 | 0.0824 | 0.0913 |
| 100 | 100 | 0.900 | 0.00085 | 0.0819 | 0.0909 |
| 100 | 150 | 0.860 | 0.00075 | 0.0782 | 0.0868 |
| 1 | 8 | 0.590 | 0.02266 | 0.0757 | 0.0816 |
| 50 | 100 | 0.825 | 0.00131 | 0.0756 | 0.0838 |
| 100 | 200 | 0.825 | 0.00066 | 0.0749 | 0.0832 |
| 20 | 50 | 0.794 | 0.00292 | 0.0744 | 0.0823 |
| 1 | 9 | 0.567 | 0.02024 | 0.0713 | 0.0769 |
| 50 | 160 | 0.756 | 0.00101 | 0.0690 | 0.0766 |
| 20 | 100 | 0.678 | 0.00182 | 0.0629 | 0.0697 |
| 20 | 150 | 0.602 | 0.00128 | 0.0555 | 0.0615 |
| 5 | 50 | 0.547 | 0.00379 | 0.0530 | 0.0585 |
| 5 | 100 | 0.419 | 0.00172 | 0.0394 | 0.0436 |
| 5 | 150 | 0.353 | 0.00103 | 0.0328 | 0.0363 |
| 1 | 50 | 0.280 | 0.00255 | 0.0278 | 0.0306 |
| 1 | 100 | 0.202 | 0.00096 | 0.0192 | 0.0212 |
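To see what changing the weight from 0.1 to 0.09 actually does, here is a sketch that re-sorts the table's combinations by both scores and lists the pairs whose relative order flips. It assumes the FSRS-5 curve and PRL = R(t) - R(t + 1), which reproduces the PRL column above; the helper names are hypothetical.

```python
# Sketch: compare the orders produced by PRL + w*R for two weights.
# Assumes the FSRS-5 forgetting curve and PRL = R(t) - R(t + 1).
from itertools import combinations

DECAY = -0.5
FACTOR = 19 / 81


def retrievability(s: float, t: float) -> float:
    return (1 + FACTOR * t / s) ** DECAY


def score(s: float, t: float, w: float) -> float:
    r = retrievability(s, t)
    return (r - retrievability(s, t + 1)) + w * r


# (stability, elapsed_days) rows from the table, in the table's order.
COMBOS = [(1, 2), (1, 3), (5, 5), (5, 8), (1, 6), (20, 20), (20, 25), (50, 52),
          (100, 100), (100, 150), (1, 8), (50, 100), (100, 200), (20, 50), (1, 9),
          (50, 160), (20, 100), (20, 150), (5, 50), (5, 100), (5, 150), (1, 50), (1, 100)]


def order(w: float) -> list:
    """Combinations sorted by descending PRL + w*R (highest priority first)."""
    return sorted(COMBOS, key=lambda c: score(*c, w), reverse=True)


def flipped_pairs(w1: float, w2: float) -> list:
    """Pairs whose relative order differs between weights w1 and w2."""
    rank1 = {c: i for i, c in enumerate(order(w1))}
    rank2 = {c: i for i, c in enumerate(order(w2))}
    return [(a, b) for a, b in combinations(COMBOS, 2)
            if (rank1[a] < rank1[b]) != (rank2[a] < rank2[b])]


for a, b in flipped_pairs(0.09, 0.1):
    print(a, b)
```

Judging by the two score columns in the table, the flips all involve S = 1 cards: with 0.1, the card at 6 elapsed days falls behind the S = 20, 20-day card, and the card at 8 elapsed days falls behind the (50, 100), (100, 200) and (20, 50) rows.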

Can I bring this up again? I feel sorting cards by descending PRL + 0.09*R is much better than the currently available options when dealing with a backlog.

I have described the problems with descending R, ascending R and descending PRL in Ordering Request: Reverse Relative Overdueness - #508 by vaibhav. The proposed sort order (descending PRL + 0.09*R) solves them and also beats the current standard (descending R) in all three metrics in a small simulation performed by Expertium.

Looking at my simulation results above, it doesn’t seem to be much better. Plus, it’s unintuitive.

I couldn’t disagree more regarding intuitiveness. You will be surprised to learn how I obtained this formula.

I took about a dozen combinations of stability (interval) and elapsed days and then arranged them in the order that felt most intuitive. Then, I calculated the R and PRL for each. Then, I fed the data (my intuitive order, R and PRL) into o1 and asked it to use R and PRL for sorting in a way that produces the same order as mine. o1 generated the above formula. Then, I produced another dozen combinations of interval and elapsed days to see if the formula sorted those in a reasonable order too, and it did.

So, it’s not that I came up with a formula and then saw that it produced good results. I did the reverse: I took the order that made the most sense and used it to derive a formula.
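The procedure described here (hand-rank some combinations, then look for a score that reproduces the ranking) can be checked mechanically by counting the pairs that a candidate score puts in the opposite order. A minimal sketch assuming the FSRS-5 curve; the function names and the sample ranking are hypothetical.

```python
# Sketch: count pairs that a candidate score orders differently from a hand-made ranking.
from itertools import combinations
from typing import Callable

Combo = tuple[float, float]  # (stability, elapsed_days)
DECAY, FACTOR = -0.5, 19 / 81  # FSRS-5 forgetting curve (assumed)


def discordant_pairs(hand_order: list[Combo], score: Callable[[float, float], float]) -> int:
    """Pairs where the earlier (higher-priority) combo gets a strictly lower score."""
    return sum(1 for first, second in combinations(hand_order, 2) if score(*first) < score(*second))


def r(s: float, t: float) -> float:
    return (1 + FACTOR * t / s) ** DECAY


def prl_plus_009r(s: float, t: float) -> float:
    return r(s, t) - r(s, t + 1) + 0.09 * r(s, t)


# Hypothetical hand-made ranking, most urgent first.
hand_order = [(1, 2), (5, 5), (20, 20), (1, 8)]
print(discordant_pairs(hand_order, prl_plus_009r))
```

A count of 0 means the score reproduces the ranking exactly; ties are not counted as disagreements, and checking the count on a fresh set of combinations mirrors the second dozen described above.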

I don’t care about intuition as long as it is better. It was discussed before that Retrievability Descending would be made the “default”. I can’t see what is wrong with adding a new, better sort order, however marginal the improvement may be.

Alright, I’ll run a few more simulations.

What if add-ons could help here? A lot of people request weird sort/gather orders (weird to me), and those would be solved too if add-ons could control how review/learning/new cards are gathered and sorted.

Does anyone know anything about this?

Add-ons can’t change sorting because sorting is controlled by the Rust code. Even if they could, they wouldn’t work on mobile.

I ran simulations at 80%, 90% and 95% DR. R (desc.) has better total_remembered and seconds_per_remembered_card than PRL+0.09R (desc.). In both cases, retention is close to the desired retention, so overall R (desc.) is better.

- Total number of remembered cards: R (desc.) is better
- Seconds per remembered card: R (desc.) is better
- Closeness to desired retention: tied at DR=80% and DR=90%; at DR=95%, R (desc.) is a little better

- `deck_size = 10000`
- `learn_limit_perday = 20`
- `review_limit_perday = 80`
- `learn_span = int(deck_size / learn_limit_perday)` (which is 500)
- Default FSRS-5 params
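The setup above, written as a small config object. This is a hypothetical sketch: the field names come from the post, but the class is not the simulator's actual code. It also spells out the `learn_span` arithmetic: 10000 / 20 = 500, the number of days needed to introduce every new card at 20 per day.

```python
# Hypothetical config object mirroring the simulation settings listed above.
from dataclasses import dataclass


@dataclass(frozen=True)
class SimulationConfig:
    deck_size: int = 10_000
    learn_limit_perday: int = 20
    review_limit_perday: int = 80  # low enough to build up a backlog
    desired_retention: float = 0.9

    @property
    def learn_span(self) -> int:
        """Days needed to introduce every new card at the daily learn limit."""
        return int(self.deck_size / self.learn_limit_perday)


# One config per desired retention that was simulated.
configs = [SimulationConfig(desired_retention=dr) for dr in (0.80, 0.90, 0.95)]
print([c.learn_span for c in configs])  # [500, 500, 500]
```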

[Result tables: DR=80%, DR=90%, DR=95%]

Hey, is difficulty_asc performing better at average_true_retention than the others?

At DR=95%, R (desc.) is better: it achieves 0.950, while D (asc.) achieves 0.939.

At lower DR the difference is small, but retention with R (desc.) still gets technically closer to DR than with the other sort orders.
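“Closeness to desired retention” can be read as the absolute gap between the simulated average true retention and DR. A sketch using the DR = 95% numbers quoted above; the function name is hypothetical.

```python
# Sketch: closeness to DR as |average_true_retention - desired_retention|.
def retention_gap(average_true_retention: float, desired_retention: float) -> float:
    return abs(average_true_retention - desired_retention)


dr = 0.95
results = {"R (desc.)": 0.950, "D (asc.)": 0.939}  # values quoted at DR = 95%
gaps = {order: retention_gap(ret, dr) for order, ret in results.items()}
best = min(gaps, key=gaps.get)  # smallest gap = closest to DR
print(best, {k: round(v, 3) for k, v in gaps.items()})
```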

I got confused between asc and desc because you keep changing the order of the sort orders in these lists.

Oh, yeah, I probably should’ve sorted them.

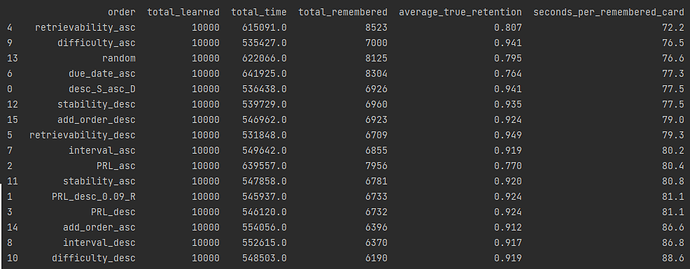

At the request of @DerIshmaelite, here are the results with a new sort order, S desc. D asc., which is computed like this:

`card["desc_S_asc_D"] = card["stability"] * (11 - card["difficulty"])`
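For illustration, a sketch applying this key to a few hypothetical cards. FSRS difficulty lies between 1 and 10, so `11 - difficulty` ranges from 1 to 10 and is larger for easier cards; sorting by the product in descending order therefore favours high stability and low difficulty.

```python
# Sketch: apply the desc_S_asc_D key from the post to hypothetical cards.
cards = [
    {"id": "a", "stability": 10.0, "difficulty": 9.0},
    {"id": "b", "stability": 10.0, "difficulty": 3.0},
    {"id": "c", "stability": 30.0, "difficulty": 8.0},
    {"id": "d", "stability": 1.5, "difficulty": 1.0},
]

for card in cards:
    # FSRS difficulty is in [1, 10], so (11 - difficulty) is in [1, 10].
    card["desc_S_asc_D"] = card["stability"] * (11 - card["difficulty"])

# Highest key first: stable, easy cards are reviewed earliest.
review_order = sorted(cards, key=lambda c: c["desc_S_asc_D"], reverse=True)
print([c["id"] for c in review_order])
```

Because the key is a product, a large stability can outweigh a high difficulty (card "c" beats card "b" here), so this is a blend of the two criteria rather than a strict S-then-D ordering.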

FSRS-6 with default parameters (note that the previous simulations used FSRS-5)

- `desired_retention = 0.95`
- `deck_size = 10000`
- `learn_limit_perday = 20`
- `review_limit_perday = 80` (this will create a backlog)
- `learn_span = 500`

Sorted by seconds_per_remembered_card

desc_S_asc_D is comparable to descending R. Descending R is slightly better at maintaining retention (94.9% vs 94.1%), but desc_S_asc_D is very slightly more time-efficient (77.5 vs 79.3 seconds per remembered card).

Maybe for some people, like DerIshmaelite, it could be a better option. But good luck convincing Dae to implement it.
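To put “comparable” in numbers, a quick calculation of the relative differences from the DR = 95% figures quoted for desc_S_asc_D and descending R (94.9% vs 94.1% retention, 79.3 vs 77.5 seconds per remembered card).

```python
# Relative differences between descending R and desc_S_asc_D at DR = 95%.
retention = {"R desc": 0.949, "desc_S_asc_D": 0.941}
seconds_per_remembered = {"R desc": 79.3, "desc_S_asc_D": 77.5}

# Retention difference in percentage points.
retention_gap_pp = (retention["R desc"] - retention["desc_S_asc_D"]) * 100
# Fraction of time saved per remembered card by desc_S_asc_D.
time_saving = 1 - seconds_per_remembered["desc_S_asc_D"] / seconds_per_remembered["R desc"]
print(f"retention: {retention_gap_pp:.1f} pp, time: {time_saving:.1%} less per remembered card")
```

That is, roughly a 0.8 percentage-point retention advantage for descending R against a time saving of a little over 2% for desc_S_asc_D.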